Edwin, one of the authors of this article, recently gave one of his poems to an AI chatbot with a simple instruction: “Write a reflection on the following poem.” The poem is about watching a bird on a branch and wondering what it knows of life and death. It ends like this:

Bird, you see further than I do, but

we both cannot see more than now,

as you join me in a stare that is life itself.

What came back was fluent, even impressive. The AI talked about “temporal consciousness” and “modes of being in the world”. But it felt like a reflection written by someone who had read everything about poetry and experienced none of it. The words were right. The understanding was missing.

That gap matters. It’s at the heart of a question every teacher who writes, plans, or creates with AI is now confronting: When a machine can produce polished text on demand, what is left for the human to do?

The answer is not that writing disappears. It’s that writing changes, and the most important new skill may be one few of us were taught – the art of iterative prompting.

Not a tool, not an author, but something in between

Here’s what AI writing platforms such as ChatGPT and Claude are not – fancy word processors. Here’s what they are – conversation partners.

Research has shown that generative AI changes writing in ways that go well beyond anything spellcheckers or grammar apps ever managed. Technology philosopher Don Ihde called the relationship between humans and their tools one of “alterity”, a kind of otherness we negotiate every time we pick up a device.

With generative AI, that negotiation has become a literal conversation. And for teachers, this changes everything.

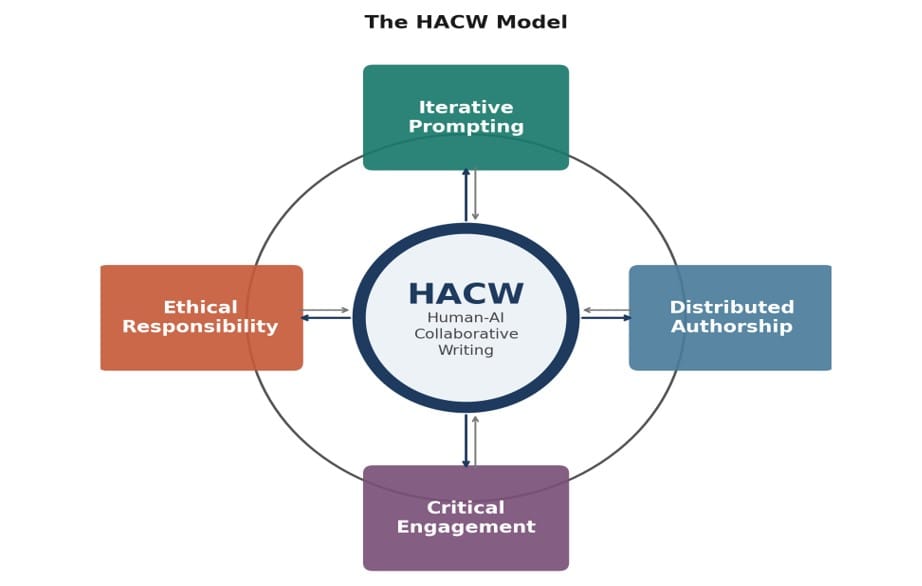

In a 2025 conference paper for the Australian Association for Research in Education (AARE), we proposed the Human-AI Collaborative Writing (HACW) model. Drawing on Karen Barad's idea of entanglement and Rosi Braidotti's posthuman thinking, the model frames writing with AI not as a person using a tool, but as a two-way partnership. Both sides contribute. But the human keeps hold of responsibility, purpose and authentic voice. That last point is not negotiable.

The diagram shows the model in action. At its centre sits the partnership between humans and AI. Around it are four principles that make that partnership productive rather than passive:

Iterative prompting (refining your instructions through ongoing dialogue)

Distributed authorship (holding onto your voice when co-writing with AI)

Critical engagement (questioning and evaluating everything the AI produces)

Ethical responsibility (ensuring the finished work reflects genuine intent).

The arrows run both ways. Each principle shapes the process, and the process reshapes each principle. This is not a checklist. It’s a way of thinking.

The prompt is the new first draft

Most people think of a prompt as a command – tell the machine what to do, get a result. The HACW model sees it differently.

As Mishra, Warr, and Islam have argued, prompt writing is an emerging literacy, one that demands clarity about purpose, audience and tone. For teachers, this is familiar territory. They already know how to pitch language for a specific context. The difference is that now the audience includes a machine.

But the real skill is not the first prompt. It’s the 10th. Iterative dialogue, the back and forth between writer and AI, is where collaborative writing comes alive.

Chang and Li call this “possibility thinking”: Each exchange opens directions the writer might not have imagined alone. You read the AI's response, challenge it, redirect it, or throw it out.

That is not outsourcing your thinking. That is a new form of it.

What teachers are actually doing

Research is catching up with what many teachers already know from experience. A study of 10 English language teachers in Australia by Barnes and Tour, published in Language and Education, found teachers were quietly using AI to create resources, write feedback and tailor materials, but treating it as a kind of “dirty little secret” for fear of professional judgment.

That finding cuts to the core of the model’s principle of ethical responsibility – teachers were grappling with the right questions about transparency and integrity, but had no shared language for the conversation.

Other research fills in the picture. Tour and Zadorozhnyy’s framework for prompt literacy, published in the Journal of Adolescent and Adult Literacy, gives teachers a structured way to practise iterative prompting using a model many educators already know.

And Creely's account of writing poetry with AI shows distributed authorship and critical engagement up close – the writer asserting voice, rejecting outputs that flattened meaning, and steering the creative direction across multiple rounds.

The challenge these studies pose is a pointed one. Teachers don’t need more technology. They need a coherent way of understanding what they are already doing.

Why this matters now

Generative AI is not coming to education. It’s already there. Teachers are writing with it, planning with it and creating resources with it every day. The question is no longer whether they’ll use it, but whether they’ll use it well, and the difference between those two things is enormous.

Consider what happens when the partnership goes wrong. AI produces text that sounds confident but gets the facts subtly wrong, and a time-pressed teacher passes it straight to a classroom. AI generates feedback that is technically polished but misses the point of the work, and nobody catches it because the language was so smooth.

As Chan has argued, ensuring that professional work reflects genuine judgement must be a foundational principle. And as Bearman and colleagues point out, we urgently need to build the capacity to evaluate AI outputs rather than simply accepting them.

The European Commission's ethical guidelines on AI in education have emphasised the importance of recognising algorithmic bias. But guidelines sitting in a document are not the same as skills in a teacher's hands.

A major study by Microsoft Research found that people who placed high confidence in AI tools reported doing less critical thinking, not more. Further research has documented what researchers call “metacognitive laziness”, the tendency to copy and paste AI-generated text without questioning it.

The convenience of a fluent answer on tap can quietly corrode the very habits of analysis and reflection that good teaching depends on. Without deliberate attention to how we interact with AI, the partnership does not stay a partnership. It becomes a dependency.

Where to from here?

The HACW model doesn’t treat AI as a shortcut. It rejects the idea that writing with AI is about efficiency alone, where speed and fluency replace thinking and decision-making.

Instead, it positions writing as an active, negotiated process in which the teacher remains central. That is the challenge and the opportunity.

For teachers, this means building real skills in iterative prompting and critical engagement, not because it’s a professional obligation, but because it will make their practice sharper and their materials better.

It means learning to model those practices for learners, so that the next generation inherits not just the technology, but the judgement to use it wisely.

And for writers of every kind? The lesson may be the one Edwin's poem was getting at all along. Genuine understanding doesn’t come from producing polished text. It comes from paying attention, from the quality of the exchange, from standing still long enough to notice what matters.

In writing with AI, the magic is not in the output. It’s in the conversation that gets you there.